Indexes Don’t Make Databases Fast

What I learned the hard way is simple. Most read-scaling pain starts with the wrong lookup path, not the wrong database.

If indexing still feels fuzzy, that is not because you are missing something. It is because most explanations either stop at “B-tree fast” or vanish into storage-engine trivia. To be clear, indexing is simpler and harsher than that. You pay extra storage and extra write work so reads stop crawling through data like they are looking for a lost sock.

Table of contents

Why indexing exists at all

What really happens without it and with it

The index shapes that matter in practice

Balanced tree

Exact-match hash

Append-first write path

Geo path

Text path

How to choose the right shape for the query you actually have

Composite

Covering

Partial

Expression-based

What changes from local product app to global platform

Where people get fooled

lookup speed

write drag

cross-region freshness

schema drift

FAQ

System Design

To Summarize

What indexing really is

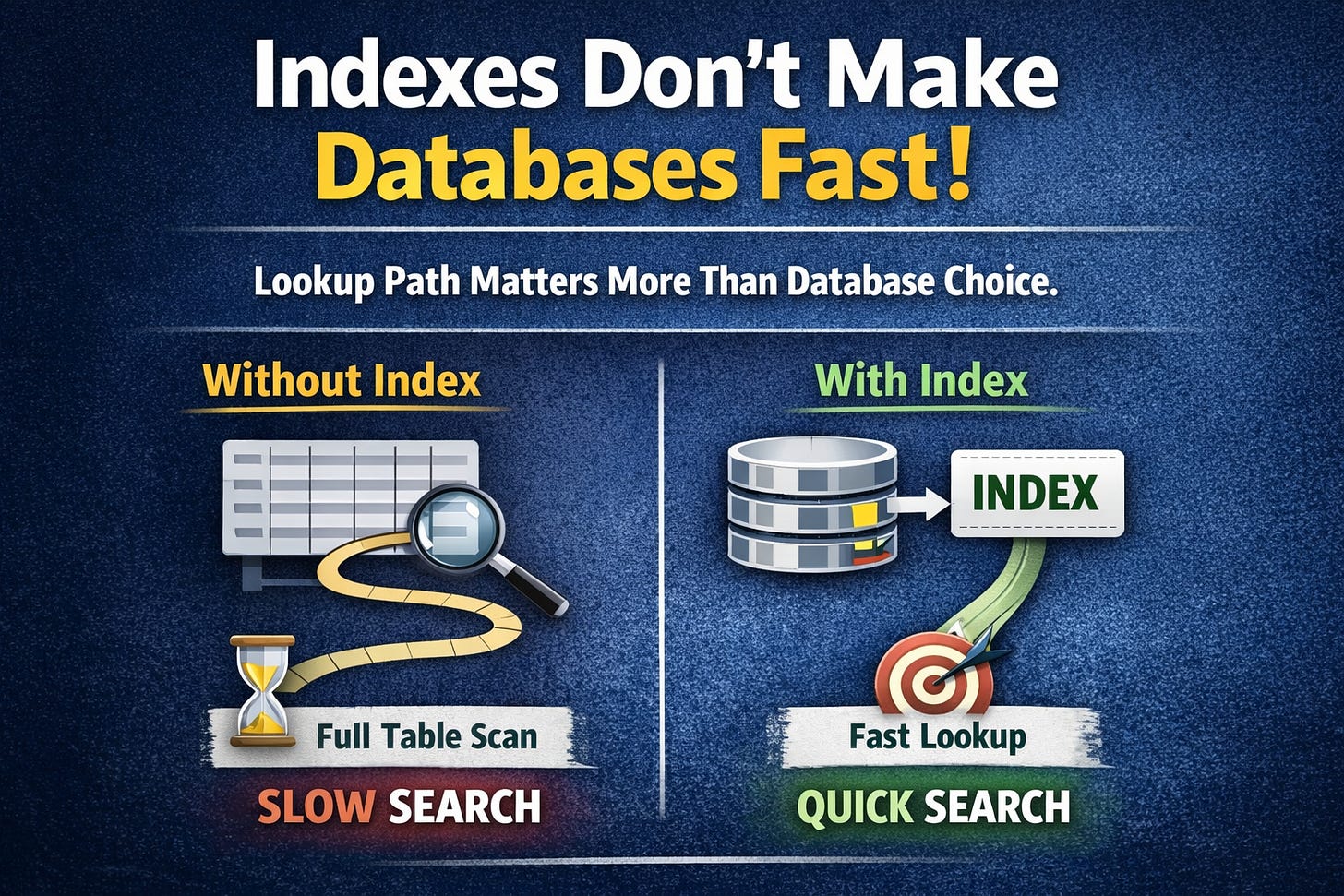

Indexing is a second structure that helps the engine find rows fast without reading the whole table.

The table stores the truth.

The index stores the path to the truth.

In other words, the table is the warehouse and the index is the aisle map. (PostgreSQL)

What happens without it

The engine starts at row 1 and keeps reading until it finds what matches.

As the table grows, the cost grows with it.

Even on SSDs, touching a lot of pages you did not need is still a tax, just a smaller tax than it used to be.

What happens with it

The engine walks the index structure first.

It finds the row location or primary key.

Then it fetches only the target rows it actually needs.

Example

Query

SELECT id, email, status FROM users WHERE email = 'jimmy@x.com'

Without index

full scan

lots of wasted reads

latency grows with table size

With index

targeted lookup

far fewer page reads

latency stays sane much longer

I would recommend saying it like this

“An index is a paid shortcut. We pay on writes and storage so reads stop wandering.” (PostgreSQL)

What this does not mean

It does not fix bad schema.

It does not fix bad query shape.

It does not fix stale cross-region reads.

It does not remove the base-table fetch unless the query can be satisfied from the index alone.

The clean correction

First fix the read path.

Then decide whether indexing is enough or whether you need caching, denormalization, search, or a different storage engine.

An 80M-user login lookup drifts from clean to clumsy and pushes p95 from 30 ms to 1.4 s

Before

Product hears “login is slow.”

Junior hears “add cache.”

Senior hears “show me the query.”

Staff hears “show me

EXPLAIN.”Principal hears “what is the exact read path and where is the waste.”

After

Add the lookup index on the exact filter column.

The query stops dragging cold pages into memory.

CPU drops, IO drops, p95 drops.

I would recommend

Fix the access path before buying bigger machines or spraying cache on a table scan. (MySQL Developer Zone)

[Indexing - the plain version]

[Client query]

|

v

[Query planner]

|

+--> [No useful index]

| |

| v

| [Scan table pages]

| |

| v

| [Check rows one by one]

| |

| v

| [Filter + sort]

| |

| v

| [Result]

|

+--> [Useful index exists]

|

v

[Walk index pages]

|

v

[Find row pointer or PK]

|

v

[Fetch target row]

|

v

[Result]

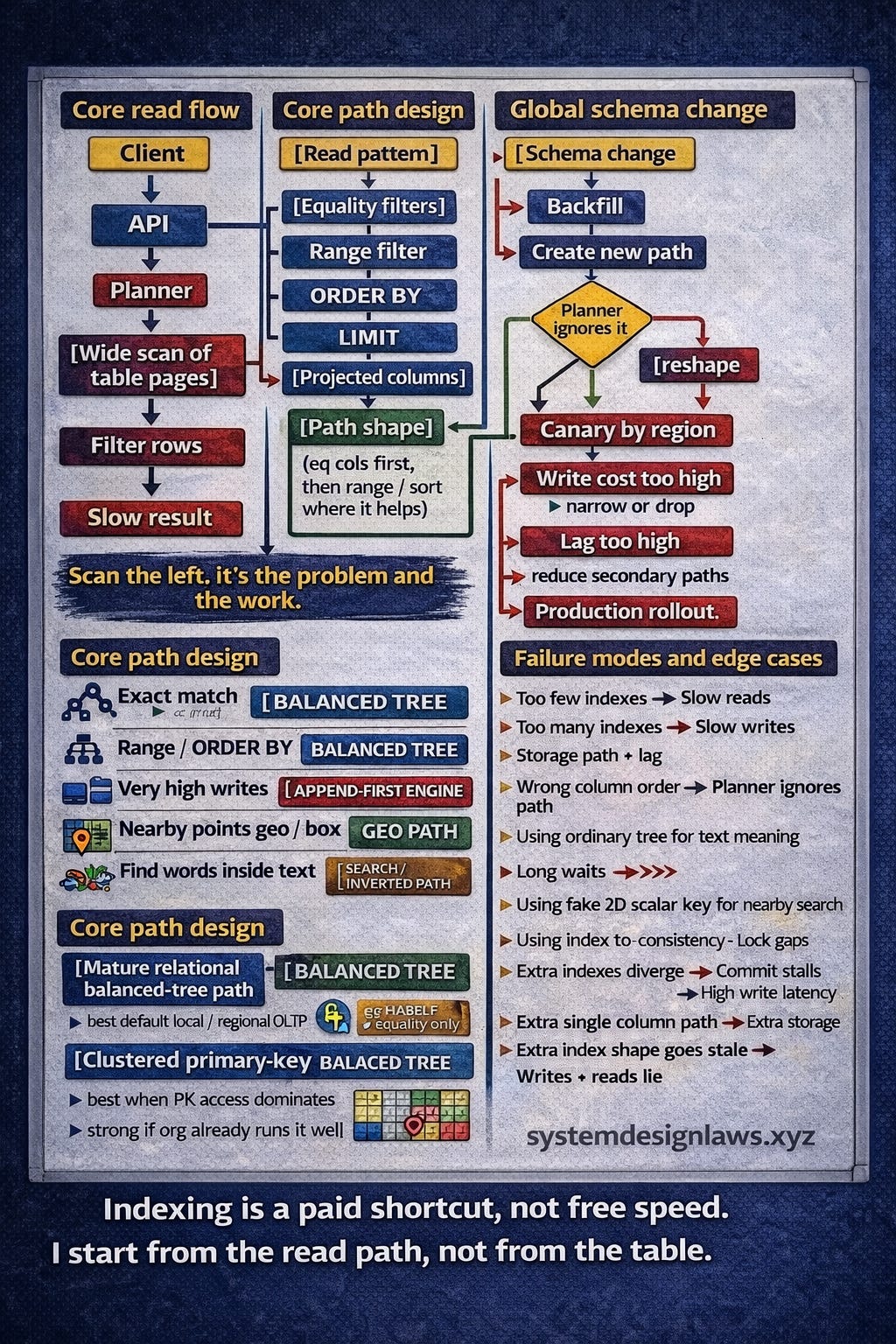

The shapes that matter

Most teams do not need every exotic option.

Most teams need to understand 5 shapes and stop pretending all read problems are the same.

Balanced tree

This is the default answer most of the time.

It is good for

exact match

range

prefix

ORDER BY

Why it keeps winning

it stays sorted

it stays balanced

it works well for mixed read-write workloads

it is the default in mainstream relational engines for a reason (PostgreSQL)

What this does not mean

It is not the best answer for text meaning, moving geo points, or relentless write firehoses.

The clean correction

Use it when the query is structured, transactional, and repeatable.

Exact-match hash

Good for equality only.

Bad for range and sort.

This is the one-trick specialist.

In practice, it shows up less than people think because the balanced tree is already strong enough for many equality lookups and much more flexible beyond that. (PostgreSQL)

What this does not mean

Faster on paper does not mean better in production.

The clean correction

Use it only when equality is the whole game and nothing else matters.

Append-first write path

This is where write-heavy systems stop behaving like ordinary OLTP tables.

New writes land in memory and an append log first.

Data flushes to immutable disk files later.

Background compaction merges files and removes junk.

Bloom filters help skip files that definitely do not contain the key.

Reads get more complex because the engine may need to check memory plus multiple disk structures.

This trade exists because random in-place updates are expensive when writes are relentless. (Apache Cassandra)

What this does not mean

This is not “just another index type.”

The clean correction

This is a storage-engine choice for write-heavy systems like logs, metrics, clickstream, and large event pipes.

Geo path

Nearby search is not 2 independent 1D filters pretending to be 2D geometry.

A naive

(lat, lng)path can still drag in a huge candidate band or box and then filter later.Better geo paths cluster nearby points first, then apply true-distance filtering at the end.

In-memory geo indexes are often the practical answer when points move frequently. (Redis)

What this does not mean

A composite latitude-longitude index is not the same thing as a real proximity index.

The clean correction

Use a geo-aware path when the product says “near me,” “within 5 miles,” or “closest driver.”

Text path

Once the question becomes “find this word anywhere inside text,” normal structured lookup logic is the wrong shape.

Full-text wants a reverse map from terms to documents.

Tokenization, lowercasing, stop-word removal, stemming, postings lists, and ranking all show up here.

This is why text retrieval lives differently from ordinary equality and range queries. (Google Cloud Documentation)

What this does not mean

LIKE '%term%'is not a grown-up search strategy at scale.

The clean correction

Use a search-style index when the product cares about terms, language, relevance, or phrase matching.

A delivery platform tracks 12M moving points, writes location every few seconds, and “nearby” gets slower exactly when city traffic gets worse

Before

Team forces moving coordinates into a rigid disk-oriented path.

Re-index work rises.

Freshness falls.

Nearby search turns stale under load.

After

Use an in-memory geo path.

Query the center cell plus neighbors.

Filter by true distance at the end.

I would recommend

For constantly moving locations, choose write-friendly geo indexing first or the product starts lying with a straight face. (Redis)

[Index families - what problem they are solving]

[Read pattern]

|

+--> [Exact match on scalar]

| |

| +--> [Balanced tree]

| |

| +--> [Hash if equality only]

|

+--> [Range or ORDER BY]

| |

| +--> [Balanced tree]

|

+--> [Very high write ingestion]

| |

| +--> [Append-first engine]

|

+--> [Nearby points / radius / box]

| |

| +--> [Geo path]

|

+--> [Find words inside text]

|

+--> [Search / inverted path]

How to choose the shape from the query you actually have

Start from the query, not the table.

Ask

What do I filter on every time?

What do I sort on every time?

What do I return every time?

How fresh does the product actually need this result to be?

Then shape the structure around that path.

Composite

If the query repeats the same multi-column filter and sort, combine those columns in 1 ordered structure.

Example

Query

SELECT order_id, total, created_at FROM orders WHERE customer_id = ? AND status = 'paid' ORDER BY created_at DESC LIMIT 20

Better shape

(customer_id, status, created_at DESC)

Why it works

equality filters first

sort key after

small limit

the engine narrows fast, then returns rows already in the right order

Column order matters.

Prefix matters.

“We indexed all the columns” is not the same as “the query can use the index well.” (PostgreSQL)

What this does not mean

More columns is not automatically better.

The clean correction

Put the repeated equality filters first, then the range or sort dimension that actually matters.

Covering

If the query needs only columns already present in the index, the engine may skip the base-table lookup.

That can be a big win on hot reads with small result payloads.

It can also bloat the index if you get greedy and shove too much into it.

Relational engines support this in different ways, including index-only scans or covering behavior. (PostgreSQL)

What this does not mean

Every hot query deserves a fat covering index.

The clean correction

Use it where the read volume is huge and the projected columns stay small.

Partial

If the hot query only touches a small, repeated slice of rows, index only that slice.

Good examples

only open orders

only failed jobs

only active subscriptions

This keeps the structure smaller and cheaper while directly matching the hot filter. (PostgreSQL)

What this does not mean

Partial is not clever by default.

The clean correction

Use it only when the hot predicate is stable and repeated.

Expression-based

If the query repeatedly computes the same expression, store the retrieval path on that expression.

Good examples

lowercased email

normalized phone

extracted domain

date bucket

What this does not mean

Derived expressions do not save a bad data model.

The clean correction

Use them when the expression is stable, repeated, and product-visible.

Technology fit from local product to global platform

Local to regional product app

balanced read-write workload

predictable relational access

feature set matters

I would recommend the mature relational balanced-tree path

Existing primary-key-heavy stack

most reads are PK-based

operational familiarity matters

I would recommend the clustered primary-key path

Global transactional platform

fresh reads matter across regions

secondary lookup on non-key columns matters too

I would recommend the globally consistent transactional path

Firehose ingest

logs, metrics, clickstream, IoT, audit, telemetry

I would recommend the append-first write-optimized path

To be clear

lookup speed and global freshness are different problems

indexing helps with the first

replication and transaction semantics decide the second (Google Cloud Documentation)

A payout system adds payment_state_v2 mid-flight, Tokyo writes first, Dublin retries second, Virginia reads now, and schema drift turns simple lookups into archaeology while p99 misses 150 ms

Before

New field lands.

Old lookup path still reflects old reality.

Queries slow down or miss the hot path entirely.

Product thinks data is wrong when the path to data is wrong.

After

Add schema.

Backfill safely.

Build the new structure.

Verify with the plan.

Canary by region.

Watch write cost and lag.

I would recommend

Separate 2 questions every time

how fast do I find it

how fresh must it be

The first is a lookup-path question.

The second is a consistency question. (Google Cloud Documentation)

[How to shape the path from the hot query]

[API request]

|

v

[Query pattern]

customer_id = ?

status = 'paid'

ORDER BY created_at DESC

LIMIT 20

|

v

[Design choice]

Equality filters first

Sort key after

Small result set

|

v

[Index shape]

(customer_id, status, created_at DESC)

|

v

[Planner]

|

v

[Walk to customer + status slice]

|

v

[Rows already ordered by time]

|

v

[Return top 20]

Why it matters

Because most read pain starts as lookup-path waste, not hardware weakness.

Because every extra structure taxes writes, storage, maintenance, and rollout risk.

Because text, geo, and write firehoses are not side quests. They are different retrieval problems.

Because once you hit real scale, the blast radius is not local.

1 wrong choice can slow writes, widen lock scope, increase lag, and make every region feel slower at once.

Because on some engines, secondary structures also affect commit latency or lock behavior.

Range scans and isolation choices can interact with index ranges and lock gaps.

Additional secondary paths can add commit work.

Because on a 200B-row table, “just add an index” is not a harmless sentence. It is a storage bill, a write tax, and a failure-mode decision. (MySQL Developer Zone)

I would recommend

Treat every production index like a product feature with an owner

query family

latency target

write-cost story

storage story

removal plan

What this does not mean

More indexes is not maturity.

Fewer indexes is not elegance.

The clean correction

The right indexes on the right paths is maturity.

An event ledger hits 200B rows, 40k writes/sec stay hot, 9 teams add their own read convenience, and replica lag turns “data platform” into “data apology”

Before

Every team adds a structure for its dashboard.

Writes slow.

Storage swells.

Lag creeps.

Everyone blames growth.

After

Keep only the serving paths tied to core product reads.

Push text, geo, and analytics into their right lanes.

I would recommend

Under-indexing kills reads.

Over-indexing taxes writes.

The trick is not courage.

It is ownership. (Apache Cassandra)

FAQ

“When should I add an index?”

When the same query repeatedly filters, joins, or sorts on the same fields and that path matters to latency, revenue, or habit.

If the table is tiny or the query is rare, save the ceremony. (MySQL Developer Zone)

“Should I add cache before index?”

I would not.

Cache on top of a table scan is still a table scan wearing cologne.

“What is the safest default?”

Balanced tree.

It handles equality, range, and ordering well and stays the default in mainstream relational engines. (PostgreSQL)

“When do I pick exact-match hash?”

When equality is the whole game and you truly do not care about range or sort.

That is rarer than people make it sound. (PostgreSQL)

“When do I pick the append-first write path?”

When writes dominate so hard that update-in-place becomes the bottleneck.

Think metrics, logs, clickstream, IoT, audit, event firehose. (Apache Cassandra)

“Can a composite

(lat, lng)path solve nearby search?”Better than 2 separate indexes, still not great.

It preserves ordered dimensions, not true 2D locality.

That is why nearby search usually needs a spatial path plus final distance filtering. (Redis)

“How do I handle a 5-mile radius?”

Find the center cell.

Include neighboring cells.

Fetch candidates.

Filter by true distance.

Return the final set.

The approximation gets you close. The distance filter makes it correct. (Redis)

“When do compound indexes help?”

When the query repeatedly uses the same leading filters and sort order.

Prefix order matters.

Wrong order means the planner may shrug and scan anyway. (PostgreSQL)

“When do covering paths help?”

When the query returns only a small set of columns and runs constantly.

Great for small hot reads.

Bad when you bloat every structure trying to be clever. (PostgreSQL)

“Can indexing solve cross-region consistency?”

No.

Indexing solves lookup path.

Consistency is a transaction and replication property. (Google Cloud Documentation)

“How do I handle new fields globally without blowing up reads?”

Add schema.

Backfill.

Build the new structure.

Verify with the plan.

Canary.

Watch write cost, storage cost, and lag.

Then cut over. (Google Cloud Documentation)

“What works at what scale?”

Local to regional product database

the mature balanced-tree relational path is usually the sharpest default

Existing primary-key-centric stack

the clustered primary-key path is fine if the org already runs it well

Global transactional read path with strict freshness

the globally consistent transactional path earns its keep

Write-ingest monsters

the append-first write-optimized path makes more sense than pretending a balanced OLTP engine wants that life (MySQL Developer Zone)

System Design

To Summarize:

Indexing is a paid shortcut, not free speed.

I start from the read path, not from the table.

For most product queries, the safe default is a balanced ordered path because it handles equality, range, and sort in 1 structure.

When writes dominate, I stop pretending a balanced OLTP path will save me and I move toward an append-first engine.

When the problem is location or text, I use geo or search retrieval because ordinary ordered keys are the wrong shape.

At scale, the real trade is read latency vs write cost vs freshness vs storage.

So the job is not adding indexes. The job is choosing the few that deserve to exist.